Digital Civil Rights and Privacy: Embracing Digital Integrity

Published by Weisser Zwerg Blog on

Digital Integrity (Recht auf digitale Unversehrtheit) is a more comprehensive approach to what life in the digital age entails.

Rationale

Through my work with the nym mixnet and nymvpn, I discovered the growing Digital Integrity movement in Switzerland.

In some Swiss cantons - like Geneva - this principle has already been incorporated into constitutional law, highlighting that as our lives become more digital, our rights need to expand beyond traditional data protection.

In a 2018 statement[1], francophone data protection authorities led by the CNIL declared:

personal data are constituent elements of the human person, who therefore has inalienable rights over them.

The primary contribution of the “right to digital integrity” is that it takes a more comprehensive approach to what life in the digital age entails, going beyond the GDPR (General Data Protection Regulation, the EU’s most important data privacy law). What is at stake is not simply data protection, but the reality that many of us live substantial parts of our lives online - and these digital lives deserve protection to safeguard our autonomy. This autonomy implies that our digital integrity must not be violated.

At its core, Digital Integrity emphasizes that our online actions and data deserve the same respect and legal protection as our physical selves, ensuring autonomy, privacy, and security in the digital realm.

Recent articles - such as “Nach der Abstimmung in Genf: Was das Recht auf digitale Unversehrtheit bedeutet” (“After the vote in Geneva: what the right to digital integrity means”) - indicate that other Swiss cantons are also considering similar constitutional changes.

By rachyandco - Own work, CC BY-SA 4.0

This blog post summarizes my thoughts on the right to Digital Integrity, what it means in our daily lives, and why it matters for everyone in our connected age.

Approach: Reframing Digital Integrity

Digital Integrity can seem like a broad, abstract idea. Therefore, we need a systematic framework to identify the opportunities (upsides) we want to enable and the threats (downsides) we aim to protect against.

Although the link between Digital Integrity and “Identity” (or, more specifically, “Entity with Identity” (EwI)) might not be obvious at first, I encourage you to read the full story in the appendix. By the end of that discussion, you will have a clear, structured view of how identity connects to digital integrity - helping you see the analogy between our physical selves and our digital extensions.

Since I know many readers prefer the concise version, I will move forward with the main storyline. Yet I want to briefly highlight the connection between identity and digital integrity as follows:

Identity emerges from specific, complex configurations (states) bundled together with computational processes (what we might call “Turing machine heads” in computer science), and these processes link us to a continuous existence in time and space. Applying this understanding to the online world creates an analogy between our physical selves and our digital extensions, from social media accounts to workplace collaboration tools.

Through this analogy we can understand why our digital extensions deserve the same autonomy and protection we expect for our physical selves.

Joining the Dots: Bridging Entity with Identity (EwI) and Digital Integrity

In this section, I want to deliver on my promise of a systematic approach that helps us see the opportunities we want to foster and the threats we aim to defend against, all through the lens of the “right to digital integrity”.

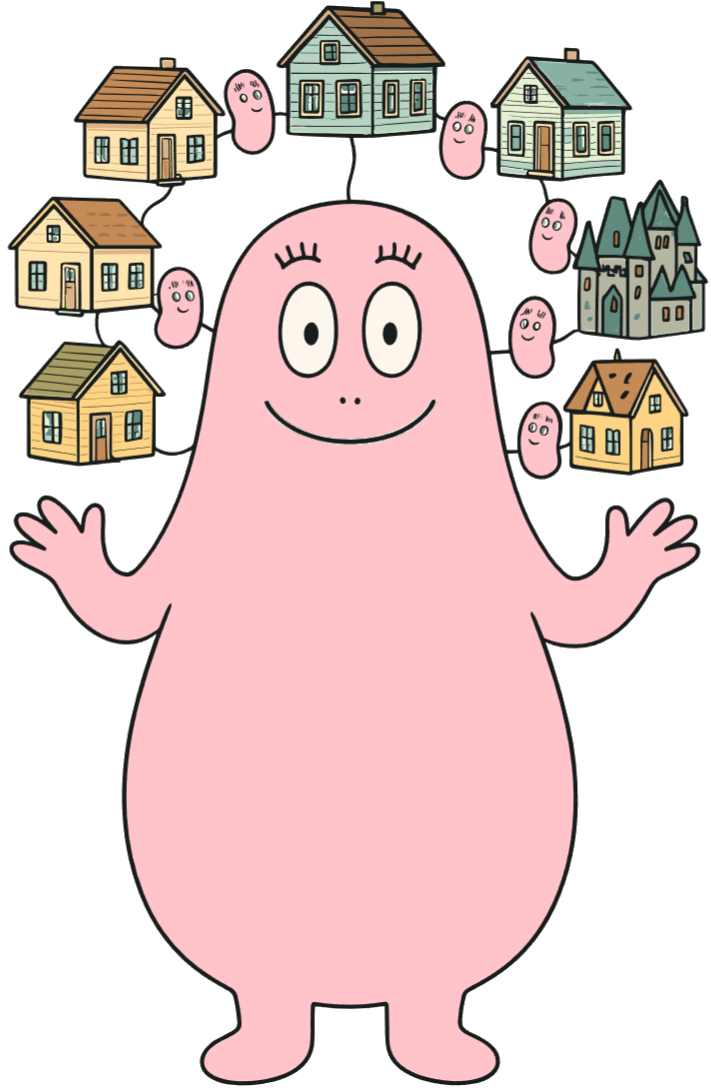

I’ll use a few illustrations to emphasize key points. You may or may not be familiar with Barbapapa, a 1970s children’s book whose title character (and “species”) can morph and reshape itself, and even split into multiple copies (similar to mitosis (cell-division) in biology).

Barbapapa’s shape-shifting powers are a useful metaphor for how our “digital selves”[3] can extend and transform in the online world.

Now, let’s look at the following illustration:

Imagine the large, central Barbapapa represents you as a person - a distinct, complex physical entity, that is, a specific, complex physical configuration bundled together with your physical processes (biological cells, brain activity, …). All the smaller Barbapapas radiating from your “head” symbolize the complex digital configurations you create and maintain in the online world.

Barbapapas can effortlessly change form or split into multiple copies. This is a playful analogy for how a single person can “branch out” into various online services and devices while remaining one cohesive individual.

Examples of these Digital “Extensions”:

- Configurations on Your Physical Devices

  - Smartphones

  - Laptops and computers

  - Even connected home appliances

- Digital Accounts with Service Providers

  - Your phone and internet service providers

  - Social media platforms like Facebook

  - E-commerce sites like Amazon

  - Cloud-based services such as Office365

  - Banking and financial platforms

Each of these systems can be viewed as an extension of your core self, much like sprouting additional arms would extend your physical abilities. These extensions often include their own processors (CPUs or other computing units), but you are still the driving force: just as your brain controls your arms, you orchestrate these digital tools.

By leveraging these digital extensions, you increase your power and reach - much like an additional set of arms would increase your power and reach.

The increase in power comes from two main benefits:

- Enhancing Your Thinking Processes

Digital tools (like search engines, data analytics, or even AI-based assistants) can supercharge how you gather information and solve problems. This is similar to using an excavator instead of a shovel: humans have historically leveraged machines to extend their abilities well beyond what their biological bodies alone can do.

- Broadening Communication

Instead of being limited to your immediate surroundings, you can now connect in real time with people all over the globe. This development is remarkably modern in human history - just a few centuries ago, nearly all interactions happened face-to-face or took weeks of travel or slow correspondence.

What you aim for is to open yourself to connections that benefit you - friends, family, and professional contacts.

However, with these advantages come new vulnerabilities. Exposing your digital “extensions” to beneficial contacts - friends, family, colleagues - makes sense. But the same connectivity can bring you into contact with bad actors eager to exploit or deceive you. Therefore, your other goal is to protect yourself from those with malicious intentions.

Hence, the “right to digital integrity” focuses on ensuring that these vital digital extensions remain protected - maintaining your autonomy, privacy, security and safety both offline and online.

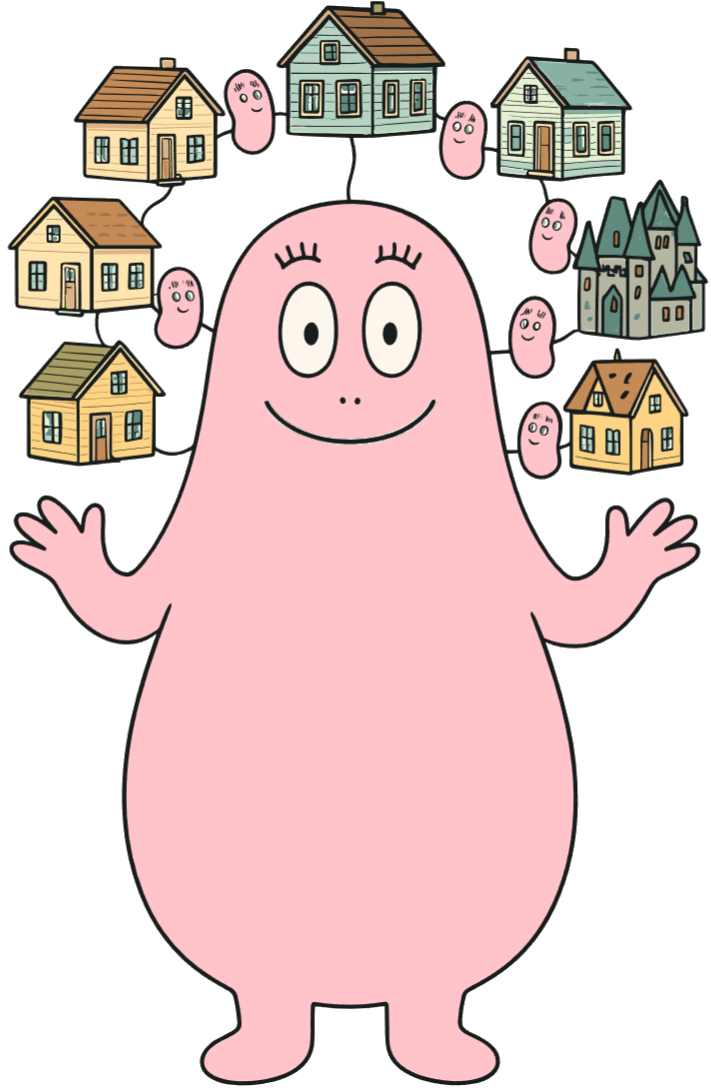

Your Digital Identities Live Under a Stranger’s Roof

Most people don’t realize a crucial fact about living in today’s digital world: most of your online “extensions” of self exist under someone else’s roof.

It’s obvious that your online accounts - whether for email, social media, or e-commerce - reside on servers controlled by third parties. But the same principle applies to your so-called “smart” devices: especially your smartphone, but also your internet-connected refrigerator, your increasingly computerized car, or even a future household robot. You may assume you truly own these gadgets - after all, you bought them - but you rarely control or fully understand their internal workings. Even highly technical users can’t verify that a device’s “smart” functions always act in their best interests. Modern operating systems are extremely opaque and complex, making it hard to know if they’re really “on your side” or on someone else’s side.

Why Ownership Can Be an Illusion

- Many “smart” devices come pre-installed with proprietary firmware or locked bootloaders, preventing you from examining their code.

- Even open-source components can be overshadowed by hidden hardware backdoors or vendor-supplied updates.

- Internet of Things (IoT) devices often send data to remote servers you have no visibility into, making it hard to confirm how your personal information is used or shared.

I urge you to pay the same careful attention to where your digital “extensions” live as you would if your children had to live under a stranger’s roof.

Look at the following illustration. It portrays how our “digital selves” fan out across various online services - figuratively living under strangers’ roofs.

[2:2]

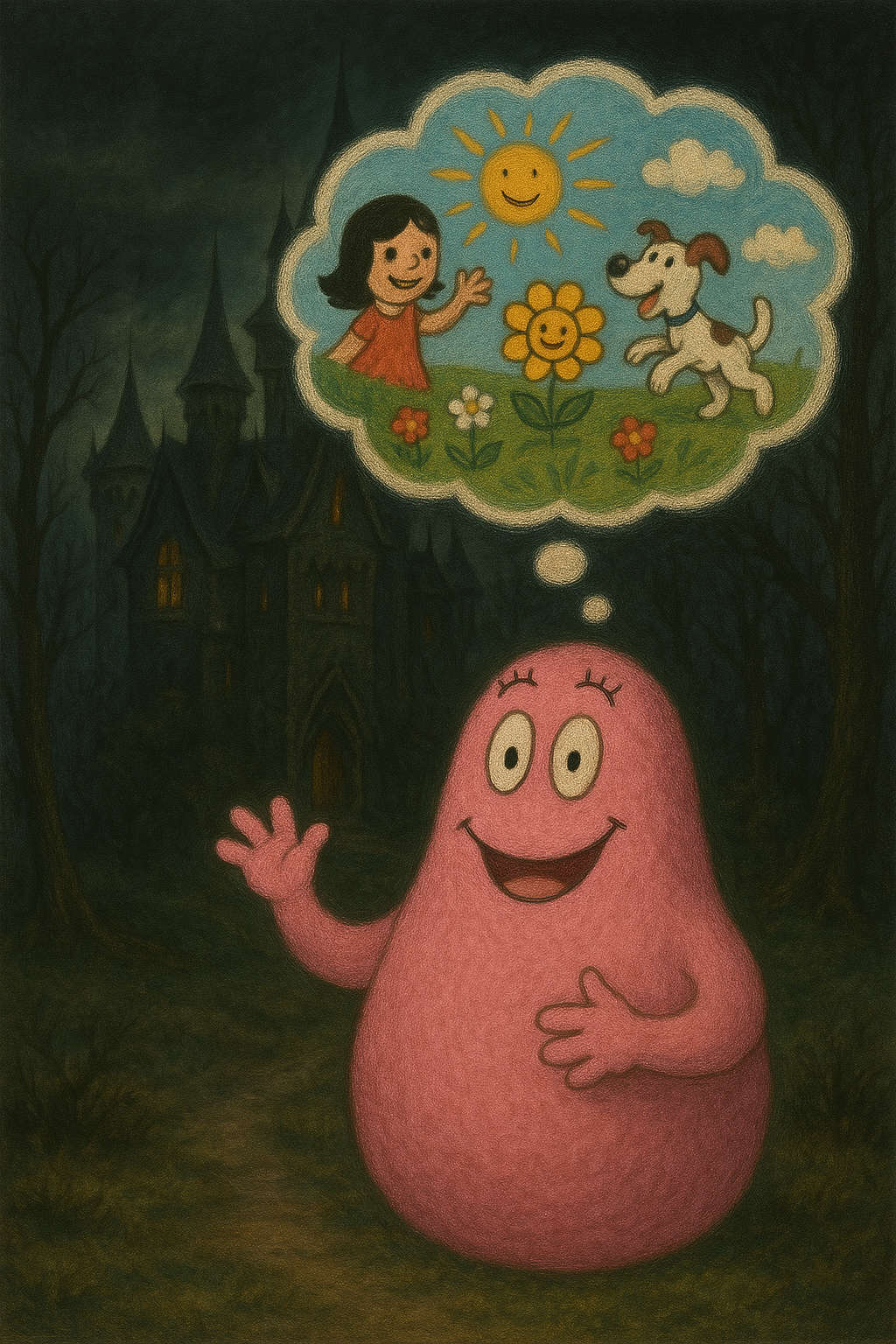

Many of these “homeowners” may be decent and well-intentioned:

[2:3]

But some could be malicious actors. If you could feel the cold, calculated, ill-intentioned atmosphere in their house and their hostility were obvious, you’d quickly move on.

[2:4]

However, true bad actors are usually skilled at deception. They often conceal their intentions behind a friendly facade so that you and your digital “extensions” stay under the impression that everything is safe:

[2:5]

In these deceptive environments, you can’t simply “pull back the curtain” because those systems are fully controlled by hostile forces - meticulously designed to hide their true nature from you.

Company Owner Analogy

I want to present another analogy to show the care you should take when your digital “extensions” reside in environments controlled by strangers.

Imagine you’re the owner of a company. Would you choose to move into a building owned and operated by your competitor, renting an open workspace with no way to lock up your confidential documents? And no way to keep your competitor’s employees from walking right behind you to watch what’s on your screen?

Now, consider an even worse scenario: instead of just a competitor - who might not always be on your side - you move into an open workspace owned by a company that specializes in corporate espionage. They offer low rent, cater to your convenience, and make you feel at home, but the longer you stay under their roof, the more they can exploit your activities and siphon off your intellectual property.

In practical digital terms, this is analogous to using services or devices that transmit and store your sensitive information on servers you don’t control. If these providers are untrustworthy, they can monitor your activities and data or share it with third parties. Even if they appear friendly, there’s no guarantee your information is safe without strong security measures like end-to-end encryption.

And even if you trust your current coworking space landlord, it remains a risky bet. Next year, they could sell their business or merge with someone else, and your information may end up under new ownership you didn’t bargain for.

Digital Integrity Threat Model

Below is an overview of the main threats that I believe the digital integrity movement should safeguard against. Recognizing these patterns is crucial for protecting our autonomy in an increasingly connected world.

- Denying Life-Cycle Events

Even though we may wish to let certain digital identities or past incidents “die”, some platforms or data processors refuse to allow information to be fully removed. This undermines the “right to be forgotten”.

- Old social media posts that cannot be deleted - ex-partners tagging you in embarrassing photos that you’ve repeatedly tried to remove.

- Online mugshot websites that refuse to delete outdated arrest records unless you pay a fee, even if charges were dropped.

- News outlets archiving minor youthful indiscretions, long after they stop being relevant or fair to display.

- America’s farmers becoming prisoners of agriculture’s technological revolution because manufacturers deny them the right to fix “their” tractors.

- Forcing Life-Cycle Events

Sometimes, you have no choice but to create an online account to access essential services, effectively “giving birth” to a new digital extension of yourself in a space that you cannot fully control.

- Being forced to open an account for train tickets when paper tickets are phased out.

- Pushing digital/electronic patient health records (ePA) despite serious concerns about privacy and security.

- Government e-services mandating an e-ID without providing a paper-based workaround - blurring the line between voluntary and coerced digital participation.

- Microsoft planning to allow installation of its operating system only with a Microsoft account in the future.

- Surveillance and Observation

Companies or agencies can construct a detailed “digital twin” through constant data collection: a psychological or behavioral model of you, like a “crash-test dummy” version of your psyche, built from data on your habits, preferences, and even emotional states. It can be used to predict or influence your behavior.

- The Facebook–Cambridge Analytica data scandal, in which personal data was harvested and used to sway political choices.

- Smartphone apps tracking location and usage patterns continuously, creating a precise timeline of your activities.

- Email providers like GMX no longer letting users opt out of spam filters, meaning every message sent or received is scanned by their system.

- Microsoft’s “Recall” Project taking snapshots every five seconds so that “your” PC becomes a totalitarian surveillance machine.

- From iOS 18.2, Apple is preparing for the new “Onscreen Awareness” of the AI assistance system with its App Intents API so that Siri will know what users see in order to help them.

- The Cybertruck blast reveals the extent of Tesla’s sprawling surveillance capacity.

- Seduction

These tactics prey on your existing cravings, encouraging you to indulge in things you already find tempting - even if you know they are harmful - like pushing you to buy more sweets or subscribe to addictive services.

- Being nudged to buy junk food repeatedly through food delivery apps offering “limited-time” discounts.

- Video game “loot boxes” designed to tempt players into spending more real money for random rewards.

- Subscription-based services bombarding you with free trials that automatically renew, luring you into long-term commitments.

- Nudging

Gently pushing you to do things you do not really want to do, e.g. to share more data or adopt behaviors you did not intend, often under the claim that it is for your own good.

- Frequent pop-ups suggesting you enable constant location sharing to “improve your experience”.

- Auto-enrolled loyalty programs that require extra steps to opt out, steering you into sharing personal information.

- Frequent prompts to share more personal data “to improve the experience”.

- Coercion

Using blackmail or the threat of cutting off your essential digital identities - like your bank accounts - to pressure you into compliance.

- The practice often called “debanking”, where your bank account is suddenly closed without explanation, affecting even individuals with no criminal record or suspicious activity.

- Holding social media accounts hostage (e.g., “We will ban you permanently if you do not agree to revised terms of service”).

- Punitive measures in a digital ecosystem - like Microsoft’s new service agreement, which states all user content may be reviewed. This will lead to deletions and account suspensions and there are reports of journalists being suspended for language labeled “offensive” in news discussions.

- Backchannel Interference

Trying to reshape your core self at a deep level - similar to brainwashing - by altering your thoughts or emotional patterns in ways you did not consent to. These methods can range from highly manipulative ad campaigns to more subtle forms of psychological profiling intended to erode personal autonomy.

- The Integrity Initiative using clandestine tactics to manipulate public opinion.

- “Der hausgemachte Desinformationsskandal” (“The home-made disinformation scandal”) in the UK, exposing how state-run operations can covertly shape political discourse.

- Algorithmic “rabbit holes” (such as extreme content on video platforms) that can shift a user’s worldview over time through highly targeted content.

The threats above often overlap. Surveillance powers coercion; seduction can become nudging. Recognizing that they function within a larger ecosystem of digital risks helps you guard your autonomy and safeguard your digital integrity.

Remember:

“The cloud is just someone else’s computer.”

This threat model focuses specifically on personal digital extensions - our identities, data, and activities online. But it doesn’t even begin to address corporate-level risks, such as industrial espionage, where companies store their “crown jewels” (like trade secrets or intellectual property) on external cloud servers vulnerable to breaches.

Another overlooked area is the legal grey zone around data generated by precision agriculture. For example, sensor and satellite data from farms can be used in the commodities futures market - sometimes in ways that work against the interests of the farmers who produced it. If third parties use this data to predict crop yields and influence market prices, small-scale farmers may find themselves at a disadvantage, lacking access to the same insights or trading tools used by financial firms.

It’s becoming a recurring theme that we often finance the very systems that compromise our rights and autonomy - effectively paying for our own exploitation.

A common example is the “IoT ecosystem”, where buying connected devices (such as thermostats, speakers, or smart TVs) subsidizes ongoing data collection pipelines. This data is then used for targeted advertising, AI training, and profiling, which often feeds back into systems that further invade our personal spaces.

The result is that we end up bearing both the financial cost (by buying the hardware, paying monthly fees, or enduring intrusive ads) and the privacy cost (as our data is harvested and monetized) while these platforms reap the profits. These business models thrive on continued user engagement, effectively making us both the customer and the product.

Some final thoughts:

A digital “extension” of yourself becomes more valuable the more you customize it. Customization amounts to a richer, more specific, and more complex configuration - like your smartphone with its countless personal settings and apps. This is why migrating to a new device, even on the same platform, can be a major hassle.

Some digital “extensions” of yourself are critical for your economic well-being. Bank accounts are the most obvious example, but online marketplaces where you buy and sell goods, as well as professional platforms like LinkedIn, also belong in this category. Losing access to these essential services is akin to cutting off a physical limb, leaving you feeling handicapped in your daily life.

Some of these digital services are forced upon us - like electronic patient health records. In many healthcare systems, opting out can be cumbersome or practically impossible, raising concerns about data security and patient privacy. Meanwhile, old-fashioned paper sometimes still works better, and caring for these unwanted digital extensions can feel like looking after a poorly behaved stranger’s child.

Who actually benefits from this push toward digital-only solutions? Probably not you. It’s worth asking whether those who profit from such moves truly have your best interests at heart.

Opportunities We Want to Foster

We’re now going to explore the opportunities that encourage people to engage in the digital realm in the first place. By identifying these positive uses, we can design systems that are more resistant - or at least less vulnerable - to the digital integrity threats outlined earlier.

Broadly, these opportunities fall into two main categories:

- Enhancing Your Thinking or Information Gathering and Processing Capabilities

- Broadening Communication, where you expose yourself to people who benefit your personal or professional life.

The lists below are by no means complete, but they offer practical examples of how digital tools and services can benefit us.

- Enhancing Your Thinking or Information Gathering and Processing Capabilities

  - Computers (Laptops, Tablets, Smartphones) with their operating systems: These devices serve as our primary interfaces to the digital world.

  - Office / Personal Information Management (Calendar, Address Book, etc.): Tools that keep track of appointments, contacts, and tasks all in one place.

  - Web Browsers: Gateways to the internet, enabling research, entertainment, and communication.

  - Home Automation: From smart thermostats to connected lighting systems - provides convenience and data analysis opportunities in your home.

  - Search Engines: Essential for research, problem-solving, and staying informed.

  - (Price) Comparison Sites: Useful for finding deals or comparing product features.

  - Artificial Intelligence (AI), including Large Language Models: AI-driven tools can answer questions, summarize text, or even generate code.

  - Endpoint Detection and Response (EDR) / AntiVirus: Helps monitor for security threats and protect your system from viruses or malware.

  - Integrated Development Environments (IDEs): Streamline coding and debugging, boosting productivity for developers.

- Broadening Communication

- Base Layer: Internet Service Provider / Telco: Your fundamental connection to the online world.

- 1-to-1 Communication

- Messengers: such as WhatsApp, Telegram, Signal, Threema, etc.

- Bank Accounts or Crypto Wallets: for direct financial transactions.

- 1-to-Many Communication:

- Bi-Directional Platforms

- Marketplaces (e.g., Amazon, LinkedIn)

- Uni-Directional Platforms

- Professional Publishing (e.g., online newspapers)

- DIY Publishing (e.g., WordPress, personal blogs)

- Medium

- YouTube

- Bi-Directional Platforms

By focusing on these two broad categories - enhanced cognitive capabilities and expanded communication - we can harness digital tools in ways that respect and protect our digital integrity.

Fostering Opportunities with Digital Integrity

Now that we have an overview of the opportunities drawing people into the digital realm - and an understanding of the threats outlined in our digital integrity threat model - we can begin formulating political objectives that protect us while preserving our freedom to explore the digital world.

Denying or Forcing Life-Cycle Events

The two main ways we can counteract the denying or forcing of life-cycle events are:

- Establishing a legally protected fundamental right to an analog life.

- Classifying critical digital services as utilities to guarantee fair access and public oversight.

Fundamental Right to an Analog Life

The fundamental right to an analog life advocates for individuals to engage in society without mandatory reliance on digital technologies. This principle asserts that essential service providers must offer non-digital avenues - such as in-person assistance, paper-based communications, or telephone support - to ensure accessibility for all, including those without digital access or proficiency.

Beyond inclusivity, maintaining analog options enhances organizational resilience. In crises like natural disasters or cyberattacks, digital systems may falter; analog alternatives serve as critical backups, ensuring uninterrupted service delivery. This redundancy is vital for business continuity, safeguarding operations, preserving customer trust, and ensuring compliance with regulatory standards during unforeseen disruptions.

Classifying Critical Digital Services as Utilities

Utilities such as water, electricity, and gas are generally considered essential services due to their fundamental role in ensuring public health, safety, and welfare. Because these services are indispensable to both individual households and entire communities, they are often viewed not merely as consumer goods or conveniences but as necessary conditions for a standard quality of life. The nature of utilities, therefore, extends beyond ordinary market transactions, since a lack of access can severely impact individual or community well-being and interests.

This recognition of utilities as critical resources underpins their different treatment in the law. To safeguard equitable access and guarantee consistent provision, governments and regulatory bodies impose specific rules and oversight that might include rate regulations, service obligations, and monopoly protections balanced with strict accountability. Unlike other commercial services, providers of utilities frequently operate under heightened scrutiny to prevent service interruptions, unaffordable price increases, or exploitative business practices, reflecting the broader public interest these services embody.

By that logic, digital services critical to daily life should get similar legal treatment.

Surveillance and Observation, Seduction, Nudging, Coercion and Backchannel Interference

Legal Objectives

In my view, a core set of political objectives should drive the digital integrity movement:

- Strong Ownership Guarantees for Your Devices: Treat your smart devices - be it your smartphone, car, or even a household robot - with the same absolute control you have over your own body.

- At a minimum: Physical Off Switches: Require all devices with audio/video or tracking sensors to include a hardware-based “kill switch”. This should cut connection at the physical level - battery included - so users can be certain their phone, computer and sensors are truly off.

- Extending “Inviolability or Sanctity of the Home” to Digital Spaces: Apply the legal concept of “inviolability of the home” to digital realms. No entity (government or private) may intrude into your personal digital environment without proper legal authority.

- Ban on Building Detailed Psychological/Behavioral Models: Forbid the creation of highly personalized models used to predict or influence an individual’s psyche.

- Ban on Behavioral “Dark Arts” and Mass Manipulation: Outlaw large-scale psychological targeting or “mass formation” techniques.

Penalize violations harshly - whether by private companies or government agencies.

Strong Ownership Guarantees in the Context of Digital Integrity

Strong ownership guarantees empower individuals to fully control the devices they purchase and use.

Imagine if the guarantees you have over your own body parts could be taken away at any moment or restricted by someone else. That prospect alone would be frightening - we want to ensure our bodies respond only to our own will. In a digitally connected age we must consider our smartphones, laptops, and other “smart” devices as digital extensions of ourselves. Without robust legal and technical safeguards ensuring they respond only to our will, we risk surrendering control over essential parts of our lives.

When we talk about “strong ownership guarantees”, we mean more than being able to fix a broken screen or replace a battery, and more than mere data protection. We mean a comprehensive, irrevocable right to decide how, when, why, and precisely what our devices do - ensuring no hidden or involuntary features run in the background. This principle lies at the core of the right to digital integrity, and it requires that our digital existence remain under our control rather than the manufacturer’s. Achieving this vision needs both legal and technical approaches.

Legally, this could mandate that owners always retain full authority over hardware and software settings, prohibit forced updates that remove user functionalities, and ensure the freedom to operate devices offline or self-host key services.

- Device owners retain full authority over hardware and software[4] operations.

- No forced updates can be administered that remove or restrict user functionalities.

- Right to Offline Mode: Users must be legally allowed to operate their devices with no mandatory connection to a vendor-controlled network.

- Right to Self-Host Services: Users can replace cloud-based vendor services (e.g., voice assistants, data analytics) with local solutions they control.

On the technical side, well-intentioned laws are useless if devices come loaded with undisclosed backdoors or forced cloud dependencies. Manufacturers must include a physical kill switch for cameras, microphones, and location sensors; offer a verifiable offline state that doesn’t rely solely on software checks; design modular, repairable parts; allow local data processing; and refrain from implementing anti-repair mechanisms.

- Physical Kill Switch: All devices with cameras, microphones, or location sensors must include a hardware-based switch that cuts off power at the circuit level.

- Verifiable Offline State: Users must be able to confirm whether a device or its sensors are offline, ideally based on “analog mechanisms” so that you don’t need to rely on analysing software to guarantee its proper functioning.

- Modular and Repairable Design: Components like storage modules, batteries, or sensor arrays should be replaceable or upgradeable by the owner (or authorized repair shops).

- Freedom to Self-Manage Data Processing: Features such as voice recognition, AI, or data analytics should be operable locally without sending data to the manufacturer’s servers.

- No Anti-Repair Mechanisms: Manufacturers must not implement hardware or software locks to prevent owners from opening, fixing, or modifying their devices.

Violations should incur firm penalties - from heavy fines to product bans - to deter any disregard for user rights. By enforcing these standards, we send a clear message: your device is yours. Much like you decide how to move your arms or legs, you alone decide how and when your devices operate. This principle is central to digital integrity.

For instance, a farmer with a sensor-equipped tractor should be free to disconnect unneeded connectivity, replace parts at will, or run cloud-based features on a local server - without worrying that the manufacturer will penalize them legally or technically.

By requiring manufacturers to adopt these user-centric designs, we uphold the promise of digital integrity: that technology exists to serve our goals, transparently and on our own terms. When you can truly switch off connections, confirm device behaviors, and handle data locally, you feel safe exploring innovations.

Inviolability of the Home (Digital Edition)

The core idea behind the “inviolability” or “sanctity” of the home is that your home is a protected private space. This principle should also apply to the “homes” of your digital extensions. Here are two illustrations that visualize this concept:

[2:6]

Drawing on the English common law principle that “a man’s home is his castle”, we can extend its logic to digital spaces. In practice:

- No Entry Without a Warrant

- Police Must Knock and Announce

- Highest Expectation of Privacy

Translating these principles into the digital sphere defends our core autonomy. Any attempts to access the digital homes our digital extensions inhabit - whether by service providers, law enforcement, or other authorities - should be subject to the same high legal standards and protective procedures that apply to physical property.

Why Banning Psychological Models Makes Dark Arts Harder

If entities can no longer build comprehensive models of your psyche, it drastically limits targeted mind manipulation. They would have to use broader statistical approaches, which are easier for observers (including regulators) to detect and classify as harmful. This drop in precision means fewer people end up caught in hyper-personalized campaigns before they realize what’s happening - or before someone can warn them.

This increased visibility should not be underestimated. Take a look at the America’s Digital Shield project and how much effort it requires to hold major social media and data corporations responsible for their actions.

The Threat of Cognitive Warfare

The new branch of arms called cognitive warfare drives the weaponization of the social sciences: cultural anthropologists, economists, and psychologists design schemes to fight over us. Cognitive warfare uses the social sciences to fight over us as if we were simply terrain.

I’m convinced we can develop algorithms to detect and highlight campaigns that use these manipulative tactics. Instead of removing the identified content, we could tag it with a detailed, machine-generated explanation of the techniques involved. This would allow the people affected to file lawsuits for damages and hold those responsible accountable.

For obvious reasons, banning these manipulative practices is not enough on its own. Foreign state actors or shadowy groups might ignore such bans or operate outside any enforceable jurisdiction. Thus, personal vigilance and robust defense measures become just as crucial as legal prohibitions.

Tracking

Surveillance, seduction, nudging, coercion, and backchannel interference all hinge on collecting detailed data to construct a personalized “digital twin” of your psyche. Stopping or reducing this data flow makes it much harder to manipulate you.

Trusting Your Own Devices

Securing your digital life starts with the devices you actually own. The first step is to understand what data they send - and to whom. Ideally, you would minimize the cloud services integrated deep within your devices’ systems. When you use a dedicated cloud-based app, you are at least aware that you’re relying on external servers. Far more concerning are the hidden or deeply embedded services you may never notice.

For instance, endpoint security tools like Endpoint Detection and Response (EDR) / AntiVirus can monitor your every keystroke or file operation. While their goal is to protect you, they also introduce a powerful surveillance channel if they’re misused or compromised.

Below are just a few examples of hidden or low-visibility data flows in modern systems:

- Automatic Operating System (OS) Updates

- Autocorrection/Prediction in Virtual Keyboards

- Microsoft’s “Recall” Project: Snapshots are taken every five seconds when the content on the screen is different from the previous snapshot. … The PC thus becomes a totalitarian surveillance machine.

- App Intents API: From iOS 18.2, Apple is preparing for the new “Onscreen Awareness” of the AI assistance system. In the future, Siri will know what users see in order to help them.

- Biometric Identity Recognition (face, voice, etc.)

- Built-in Digital Wallets

- Location-Based Services (e.g., “Find My iPhone”, “Find My Friends”)

Each of these features can stream data back to vendors or third parties - potentially exposing your personal life to unknown entities.

Physical Camera Covers and Kill Switches

A straightforward question: Why do most laptops, tablets, and phones lack a simple hard lens cover or physical kill switch for the camera or microphone? It would add just about 1.5 cents per unit, yet only a few devices ship with a built-in opaque cover. Devices should therefore be required to include a hardware switch that cuts power at the circuit level (including the battery if needed). With hardware physically turned off, neither hackers nor hidden processes can spy on you.

Freedom of Expression

Freedom of expression is the fundamental right to share your thoughts, beliefs, and opinions - whether political, religious, or personal - without fear of censorship, punishment, or social or professional backlash. It means being able to speak your mind, create art, practice your faith, or engage in peaceful protest without worrying about being silenced, “canceled”, or penalized simply because your views are unpopular or controversial.

This freedom is essential in a society where ideas can be debated openly, and individuals are not forced to conform out of fear. Protecting freedom of expression ensures that people from all backgrounds can participate in public life without being excluded or disadvantaged for what they believe.

One straightforward way to protect freedom of expression - without fearing that you will be silenced or penalized - is to allow anonymous interaction in the digital world.

Anonymous voting is a classic example: the secret ballot guarantees that people can cast their votes freely, and as such it is a fundamental mechanism for preserving the integrity of the democratic process. By allowing individuals to cast their ballots without revealing their identities, it safeguards their ability to make choices based on personal convictions rather than external pressures or fears of reprisal. In this way, anonymous voting protects minorities in particular, reinforcing democracy’s core principle that every individual’s opinion holds weight and must be respected.

Of course, this anonymity would have to work within legal frameworks (for instance, ensuring taxes are paid and preventing violations of others’ rights). However, Zero-Knowledge Proofs (ZKPs) could enable both anonymity and support for other legal goals.

Zero-Knowledge Proofs (ZKPs) are cryptographic techniques that allow one party to prove certain facts to another - such as age, citizenship, or identity - without revealing any underlying personal data.
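To make the idea less abstract, here is a minimal sketch of one classic zero-knowledge protocol, Schnorr identification: the prover shows that they know the secret exponent x behind a public value y = g^x mod p without ever sending x. The tiny group parameters and all function names are assumptions chosen for illustration. Real systems use large groups or elliptic curves and vetted libraries, and proving attributes such as age or citizenship needs more elaborate constructions than this.

```python
import secrets

# Toy group parameters (illustrative assumption; far too small for real use).
P = 23   # prime modulus
Q = 11   # prime order of the subgroup generated by G
G = 2    # generator of that subgroup, since 2**11 % 23 == 1

def keygen():
    """Prover picks a secret x and publishes y = G^x mod P."""
    x = secrets.randbelow(Q - 1) + 1   # secret in 1..Q-1
    return x, pow(G, x, P)

def commit():
    """Prover picks a fresh random nonce r and sends t = G^r mod P."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)

def challenge():
    """Verifier replies with a random challenge c."""
    return secrets.randbelow(Q)

def respond(x, r, c):
    """Prover answers with s = r + c*x mod Q; the nonce r masks the secret x."""
    return (r + c * x) % Q

def verify(y, t, c, s):
    """Verifier checks G^s == t * y^c (mod P) without ever seeing x."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P
```

One protocol run is `x, y = keygen()`, `r, t = commit()`, `c = challenge()`, `s = respond(x, r, c)`, and finally `verify(y, t, c, s)`. The verifier only ever sees y, t, c, and s.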

Just to give a few examples:

- You might have a limit on the number of words you can send anonymously per month. In addition, you could prove (using a zero-knowledge proof) that you are a real human, over 18, and a citizen of a certain country if you want to participate in political discussions.

- You could also have a spending limit (e.g., in euros) on how much you can donate anonymously to support political parties, churches, or other organizations. Control could be placed on the receiving side - groups banned by a court order could be excluded from receiving contributions.

Although more research is required to implement these systems, they appear realistically possible. As the saying goes, “Someone who truly wants something finds a way; someone who does not want something finds reasons”.

Further aspects to keep in mind are:

- Importance of End-to-End Encryption: End-to-end encryption is crucial for preserving anonymity and free expression online. It ensures that your messages, emails, and other digital communications are only readable by the intended recipients - and not by third parties, internet service providers, or government agencies. This layer of protection becomes even more significant when paired with zero-knowledge proofs, since the technical strength of the encryption shields user data while the proof allows for regulatory compliance. Without end-to-end encryption, sensitive information can be intercepted, potentially leading to self-censorship or fear of being monitored, which undermines free speech and personal autonomy.

- Integrating Self-Sovereign Identity and Verifiable Credentials: Self-Sovereign Identity (SSI) systems, which use verifiable credentials, let individuals prove attributes like age or citizenship without revealing their full identity. For example, you can show you’re over 18 without sharing your birthday or other personal details, thanks to zero-knowledge proofs. This aligns well with the idea of digital integrity: you remain in control of what information you disclose, effectively balancing freedom of expression with accountability. When anonymity is necessary - such as whistleblowing or participating in political debates - these technologies allow you to share what’s legally required while keeping your identity safe.

Level Playing Fields

We need a regulatory requirement for structural neutrality in our digital bill of rights. This requirement applies to the places where we try to make sense of the world - whether online or offline.

Structural neutrality parallels the philosophical principle of universality. It ensures that, for all similarly situated individuals - regardless of culture, race, sex, religion, nationality, sexual orientation, gender identity, or any other distinguishing feature - the “rules of the game” remain the same. This principle is why the Roman goddess Justitia is depicted with a blindfold, symbolizing that justice should be applied impartially, without regard to wealth, power, or any other status.

The “places in which we try to make sense of the world” can be thought of as marketplaces of ideas, such as universities, research institutions, and social media platforms. These are the environments in which we discuss, debate, and explore different perspectives.

Algorithms, however, must not be designed to systematically target or punish certain people or ideas. Otherwise, we end up in a loop like this:

- You’re a bad person because you are spreading misinformation.

- How do we know it’s misinformation?

- Because it violates the scientific consensus[5].

- How do we know what the scientific consensus really is?

- Because all these scientists are saying the same thing.

- Yes, but you’ve just told me you target anyone who strays from that consensus. So I have no way of knowing what the consensus would look like otherwise!

If we punish people just for disagreeing, we create an echo chamber. We never truly discover if there’s a different perspective or a new insight that could enrich our understanding.
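The loop above can be illustrated with a few lines of arithmetic. The sketch below is a deliberately simplified model, not an empirical claim: it assumes a fixed share of people who truly agree and a fixed probability that a dissenting voice is silenced, and computes what share of the voices that remain visible agree. The function name and both parameters are hypothetical.

```python
def observed_consensus(true_agree_share, suppression_rate):
    """Share of *visible* voices that agree, when a fraction
    `suppression_rate` of dissenting voices is silenced."""
    agree = true_agree_share
    visible_dissent = (1.0 - true_agree_share) * (1.0 - suppression_rate)
    visible = agree + visible_dissent
    return agree / visible if visible > 0 else float("nan")

# Compare very different "true" levels of agreement under heavy suppression.
for true_share in (0.9, 0.6, 0.3):
    print(true_share, round(observed_consensus(true_share, 0.95), 3))
```

Under these assumptions, even a true agreement of only 30% looks like roughly 90% agreement once 95% of dissenters are filtered out, so the visible consensus tells you very little about the underlying one.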

The basic point is that level playing fields are the sine qua non of the modern world. There’s a reason this principle is so foundational and it’s about the best we’re ever going to do: letting competition unfold on a level playing field works in science, politics, and markets. It works across everything. It’s the best way to let people figure out what’s true, in a system that isn’t biased from the start.

In the realm of computer science, “algorithmic fairness” explores how automated decision-making can inadvertently create or reinforce biases. Ensuring structural neutrality means designing algorithms so that all users - and their ideas - are handled equally, without hidden or discriminatory filters.

In summary, that is why we need a regulatory requirement for structural neutrality in our digital bill of rights, particularly for the algorithms governing our marketplaces of ideas.

Conclusion

As our lives and identities increasingly extend into the digital realm, the concept of digital integrity becomes more important than ever. We have seen how identity emerges from specific, complex configurations bundled together with computational processes (“Turing machine heads”), and how those processes tether us to time-spatial continuity. Applying this understanding to the online world creates an analogy between our physical selves and the digital extensions of ourselves, from social media accounts to workplace collaboration tools.

Through this analogy we see why our digital extensions deserve the same autonomy and protection we expect for our physical selves.

It’s also critical to recognize that many of our digital identities “live under a stranger’s roof”.

[Two Barbapapa illustrations; images not reproduced here.][2:8]

In other words, our vital online accounts often reside on servers owned by companies or individuals with their own agendas.

Most people are unaware of just how much control these outside parties have.

As with entrusting your children to live under a stranger’s roof, we should pay close attention to where our digital identities really “live”.

Think of a company owner who sets up shop in a workspace owned by a competitor specializing in corporate espionage - clearly a poor choice.

The same principle applies to our digital selves: if the host’s interests are misaligned with yours, your personal data and autonomy may very well be at risk.

We also explored the many threats that undermine digital integrity, from forced or denied lifecycle events (like being unable to delete data or being compelled to open new accounts) through surveillance, seduction, nudging, and even “backchannel interference”. Recognizing these dangers shows us that digital integrity is more than just data protection; it’s about safeguarding the autonomy, agency, and well-being of our “digital selves”.

To shield ourselves, we discussed robust legal principles (like the right to an analog life, or considering essential digital services as utilities) and practical safeguards (like hardware kill switches). At the same time, we emphasized the opportunities that make the digital world so exciting: the ability to expand our thinking, our communication, and our reach in ways never before possible.

My hope is that this perspective - grounded in a systematic view of identity as rooted in physical processes and physical continuity - will prompt more focused and holistic thinking on the topic of digital integrity. In a world where digital interactions have such profound personal and societal ramifications, we owe it to ourselves to become more deliberate stewards of our online presence. By doing so, we can embrace the full potential of the digital age while remaining vigilant guardians of our autonomy, privacy, and fundamental rights.

Appendix

Identity

To understand my perspective on digital integrity, I need to first explore the concept of identity - or “entity-with-identity” (ewi).

This shorthand “ewi” simply means any entity[6] that can be distinguished from others, whether it’s a person, an organization, or a digital node on a network[7].

To grasp how identity arises, it helps to start with the smallest building blocks. When we look at the world at subatomic scales - elementary particles such as electrons, quarks, and photons - we notice that individual identity does not exist. These particles are essentially indistinguishable from one another. One electron is the same as another; they do not have any built-in identity. Their fundamental properties (charge, mass, spin) are universal. If you swap one electron with another, there is no discernible difference.

- We can’t paint an electron green to track it through space.

- We can’t pin down its exact position without disturbing its state.

- There is simply no “name tag” for an electron.

And yet, at higher levels of organization - such as molecules, cells, rivers, cats, people, or even entire ecosystems - we clearly see unique forms that are difficult to replicate perfectly. Their intricate details make each configuration distinct from all others. So where does this identity come from?

Why does identity seem to vanish at the particle level but become undeniable at more complex levels? Some philosophers have proposed “narrative” or “psychological continuity” as explanations[8]. Instead, I’ll focus on ideas rooted in physical processes and physical continuity.

Fungibility vs. Identity

To better understand identity, it helps to contrast it with its opposite: fungibility (or interchangeability). From fully fungible objects to unique entities with their own histories, there’s a continuum that includes many “in-between” cases.

- Fungible Objects: If you need a liter of water, any liter with the right purity and temperature will do. You don’t track which specific water molecules you have because they’re all effectively the same.

- In finance, for example, cash is usually considered fungible - one dollar is as good as another.

- Pseudo Fungibility: In the real world, we often treat objects as if they were interchangeable for the sake of convenience. Consider identical screws in a hardware store or a carton of eggs. Each egg might have tiny differences - variations in shell thickness or internal composition - but for practical purposes, we overlook those details and treat them as if they’re all the same.

- Entities with Identity: For complex objects - like your cat, your phone, or a crucial database - the arrangement of their internal components is so intricate that you can’t simply replace them with a perfect copy. Their physical or structural makeup is so detailed that replicating it is extremely difficult, if not impossible. Once you care about a specific object’s unique characteristics, that object’s identity solidifies.

- In digital contexts, “entities with identity” can also refer to user accounts, blockchains, or data sets that have irreplaceable records - like non-fungible tokens (NFTs), which represent digital assets that can’t be swapped one-for-one.

Let’s look at some examples:

- Consider a small, “throwaway” database you spun up to test a software idea. Initially, you might see it as fungible - interchangeable - and discardable. You don’t bother with backups or replication. But over time, you store more and more critical information in it, and soon entire teams depend on it being there, always up to date. It becomes indispensable. Backups become huge, real-time replication is challenging, and the system can’t simply be replaced with a fresh install. Its complexity and importance give it a distinct identity.

- Similarly, think about a house or a beloved pet. Each has a complex configuration - whether that’s wood, wiring, insulation, or bones, tissues, and behaviors - that is not so easily swapped out for another. That complexity makes them stand out. In daily life, we assign them identity - we give the house an address and the pet a name - because these specific configurations matter to us. We don’t treat them as casually replaceable objects, which is why we recognize them as having a unique identity.

So, one key aspect of identity is a complex configuration that’s hard to replicate. This applies even to digital “objects”, because all digital data ultimately boils down to physical storage - whether it’s flip-flops wired into larger Random-Access Memory (RAM), magnetic domains on a disk drive, optical structures on a CD, or even patterns of neuronal connections in the human brain.

But physical structure alone isn’t enough. Data by itself is inert (it stays dormant until something interprets or executes it). Even if you embrace concepts like code as data (sometimes phrased as “code is data is code”), you still need an active mechanism to interpret or execute that code. In computer science terms, this mechanism is a “Turing machine head” - a physical process that reads and manipulates data, making it useful.

The Role of Physical Processes

A Turing machine head is the key element of a Turing machine, the point where “the rubber meets the road”, so to speak: it is the place where a theoretical “tape” of data interacts with a physical reader/writer mechanism. The Turing machine is a fundamental model of computation. It’s considered maximally powerful in that any computable function can be carried out by some form of Turing machine.

A system is called Turing-complete if it can simulate any Turing machine. According to the Church-Turing thesis, any algorithmically describable process can be emulated by a Turing machine, underscoring its status as a universal model of computation.
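For readers who have never seen one, here is a minimal sketch of a Turing machine in code. The tape is inert data; only the head (the loop that reads a symbol, writes a symbol, and moves) makes anything happen. The rule table below, which increments a binary number, and all names are illustrative assumptions rather than a canonical formulation.

```python
BLANK = "_"

def run_turing_machine(tape_str, rules, state, head, max_steps=1000):
    """Run a single-tape machine; `rules` maps (state, symbol) to
    (symbol_to_write, move, next_state) with move in {"L", "R", "N"}."""
    tape = dict(enumerate(tape_str))          # sparse tape: position -> symbol
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = tape.get(head, BLANK)        # the head reads ...
        write, move, state = rules[(state, symbol)]
        tape[head] = write                    # ... writes ...
        head += {"L": -1, "R": 1, "N": 0}[move]   # ... and moves
    cells = range(min(tape), max(tape) + 1)
    return "".join(tape.get(i, BLANK) for i in cells).strip(BLANK)

# Rule table for "add one to a binary number", starting at the rightmost bit.
INCREMENT = {
    ("carry", "1"): ("0", "L", "carry"),    # 1 + carry = 0, carry moves left
    ("carry", "0"): ("1", "N", "halt"),     # 0 + carry = 1, done
    ("carry", BLANK): ("1", "N", "halt"),   # ran off the left edge: new digit
}

def increment_binary(bits):
    return run_turing_machine(bits, INCREMENT, state="carry", head=len(bits) - 1)

print(increment_binary("1011"))  # prints 1100
```

Without the head, the string "1011" would simply sit there. The rule table is itself just data until the loop interprets it, which is the sense in which data is inert without a process.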

What I want to emphasize here is that this maximally powerful universal model of computation - anchored by the Turing machine head - provides a solid way to conceptualize physical processes that read and manipulate data. Thus, the Turing machine is not just a theoretical idea but also the practical framework for modeling any data-processing activity, digital or otherwise, within the limits of computation.

Spatial Locality

When we combine two key ideas - (1) that identity arises from a specific, complex configuration, and (2) that all data-processing occurs via a “Turing machine head” - we arrive at a crucial conclusion: every entity-with-identity is spatially localized and connected.

For living beings, this is clear: each organism (from a single cell to a giant redwood) occupies a defined region in space. Biological cells function like tiny Turing machines, exchanging chemical or electrical signals that can’t cross large distances instantly and therefore must stay in relatively close proximity. Signals are bound by physical constraints (such as diffusion rates for chemicals, or the finite speed of nerve impulses). In other words, “reliable, timely communication” inherently implies a limit on how far apart these cells can be if they are to stay in sync.

Because no signal can exceed the speed of light, low-latency coordination in the digital world also depends on proximity. While “dead data” (static files stored anywhere) can be easily spread across the globe without harming a system’s overall integrity, “active” data needs to stay close (in network terms) to a Turing machine head (like a server’s CPU) for real-time operations. Whenever we want to perform computations, respond to queries, or provide services, data chunks must interact quickly with a localized processor. Speed-of-light constraints effectively translate “timely communication” into physical proximity.
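A quick back-of-the-envelope calculation shows how distance turns into a hard latency floor. The sketch below computes the minimum possible round-trip time over a given distance. The factor of roughly two thirds for light in optical fiber is a commonly used approximation and is treated here as an assumption; real networks add routing and processing delays on top.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum, km/s
FIBER_FACTOR = 2 / 3                # assumed: light in fiber travels at about 2/3 c

def min_round_trip_ms(distance_km, medium_factor=1.0):
    """Lower bound on round-trip time in milliseconds over `distance_km`."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_S * medium_factor)
    return 2 * one_way_s * 1000

for km in (1, 100, 10_000):
    print(km, "km:",
          round(min_round_trip_ms(km), 3), "ms in vacuum,",
          round(min_round_trip_ms(km, FIBER_FACTOR), 3), "ms in fiber")
```

For 10,000 km this gives a floor of roughly 67 ms in vacuum and about 100 ms in fiber under the assumed factor, no matter how fast the processors at either end are.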

Therefore, even systems intentionally built to be distributed - like databases, container orchestration platforms, or blockchains - still rely on strong connectivity and synchronization to function as one coherent entity. A globally dispersed server cluster must maintain frequent updates to remain one cohesive unit. Whenever communication slows, the system risks splitting into separate parts. The greater the distance, the harder it is to maintain high-speed back-and-forth updates without risking partitions or data inconsistencies.

Thus, from cells in biology to nodes in a database, continuous, close interaction underpins a shared identity - be it a living creature or a resilient digital system.

Ultimately, identity depends on continuous, localized interaction. “Distributed” does not mean “disconnected”. It simply describes how numerous localized processes can weave together, through strong connectivity and synchronization, to maintain a cohesive whole: an entity with both integrity and identity.

Naming and References

In the context of Rich Hickey’s conceptual framework of values[7:1], a “value” is a timeless, immutable piece of data. A simple example is the integer 8 - my 8 is the same as your 8 because there is no way to distinguish them.

Further Background:

To understand the difference between immutable data (“values”) and entities-with-identity that have their own lifecycle and state, Rich Hickey’s talk on The Value of Values offers a deeper exploration.

When we talk about specific, complex configurations - whether they’re physical objects, digital systems, or living organisms - we need a way to refer to them unambiguously. In contrast to immutable values, an entity-with-identity (ewi) is dynamic; it occupies space and often changes over time, which makes it unique in ways a purely abstract value is not.

In functional programming, “values” never change once created; they’re like mathematical truths. An entity-with-identity, however, can undergo updates or transformations while remaining the “same” entity. For instance, a server that stores and processes data might evolve - software updates, hardware replacements - yet it retains its core identity in the network.
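The distinction can be shown in a few lines. In this sketch, two equal tuples stand in for values, and a small mutable class stands in for an entity-with-identity. The `Server` class, its fields, and the hostname are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

# Values: immutable and interchangeable. Only equality matters.
my_value = (8, "config-v1")
your_value = (8, "config-v1")
print(my_value == your_value)        # True: nothing distinguishes them

@dataclass(eq=False)                 # eq=False: instances compare by identity
class Server:
    hostname: str
    software_version: str

    def upgrade(self, new_version):
        # State changes in place; the entity stays the same entity.
        self.software_version = new_version

server = Server("db-01", "1.0")
twin = Server("db-01", "1.0")        # same state, but a different entity
reference = server                   # a second name for the same entity

server.upgrade("2.0")
print(server == twin)                # False: equal-looking state is not identity
print(reference.software_version)    # 2.0: the reference follows the entity
```

The tuple can be copied freely because a copy is indistinguishable from the original, whereas the server has to be reached through a reference precisely because its state keeps changing.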

Names - or references - serve as our shortcut for pointing to these unique, identifiable configurations. Your cat has a name, your house has a physical address, and a database is reached via a connection string. In each case, the name is a compact label for a particular, non-fungible entity.

Earlier, we established that any entity-with-identity occupies a connected spatial extent or volume. One practical way to uniquely reference such an entity is by using a three-dimensional coordinate along with a specific moment in time. After all, two distinct entities cannot occupy exactly the same 3D coordinates at the exact same instant.

However, this reference scheme isn’t unique in the sense that multiple coordinates at a given moment in time may point to the same entity, because entities-with-identity occupy a volume and cannot be reduced to a single coordinate point. But crucially, only one entity-with-identity can exist at any given set of 3D coordinates at any given time.
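As a small illustration of this many-to-one relationship, the sketch below models a snapshot of the world at one instant as a set of non-overlapping boxes. Many points resolve to the same entity, but no point resolves to more than one. The box shapes, names, and coordinates are invented for the example.

```python
from typing import NamedTuple, Optional

class Box(NamedTuple):
    name: str
    lo: tuple   # (x, y, z) minimum corner
    hi: tuple   # (x, y, z) maximum corner

    def contains(self, point):
        return all(a <= p < b for a, p, b in zip(self.lo, point, self.hi))

def resolve(snapshot, point) -> Optional[str]:
    """Return the name of the one entity occupying `point`, or None if empty."""
    hits = [box.name for box in snapshot if box.contains(point)]
    if len(hits) > 1:
        # Two entities at the same coordinates at the same instant: not allowed.
        raise ValueError(f"overlap at {point}: {hits}")
    return hits[0] if hits else None

# A snapshot of one moment in time (hypothetical coordinates in meters).
snapshot_10am = [
    Box("cat", (0, 0, 0), (1, 1, 1)),
    Box("couch", (2, 0, 0), (5, 2, 1)),
]

print(resolve(snapshot_10am, (0.2, 0.5, 0.9)))   # cat
print(resolve(snapshot_10am, (0.8, 0.1, 0.1)))   # cat: different point, same entity
print(resolve(snapshot_10am, (3, 1, 0.5)))       # couch
```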

Lifecycle Events

Lifecycle events are best illustrated by companies.

Companies are a clear example of entities-with-identity. Their legal identity is distinct from the individuals who work there, yet they are still physically anchored in the real world. The “3D coordinates” of a company could be its office location, the data centers that host its applications, or even the physical servers storing its financial records. These real-world anchors illustrate how - even though a company is often viewed as an abstract “legal person” - it ultimately ties back to tangible infrastructure and people.

Just like living organisms, companies pass through key lifecycle phases that reflect changes in both their identity and physical (or digital) presence:

- Birth (Incorporation): A company begins its existence at a specific point in time - usually upon formal registration or chartering.

- Mergers and Acquisitions (M&A): Multiple companies can combine into a larger organization, or one can absorb another. This process changes corporate structures, data flows, and overall identity.

- Spin-Offs: A company may separate part of its operations into a new, independent entity - creating a “child” organization with its own identity and lifecycle.

- Renaming/Rebranding: An existing company might adopt a new name or brand identity, which can shift how it is perceived and how it manages existing contracts, digital assets, and user data.

- Restructuring/Reorganization: Companies often reorganize their operations or legal structure (e.g., changing from a partnership to a corporation). This can affect data governance responsibilities, internal hierarchies, and security policies.

- Bankruptcy or Insolvency: When a company can no longer meet its financial obligations, it might enter bankruptcy proceedings. Data - like customer information - can become an asset that is sold or transferred.

- Relocation or Expansion: Moving operations to a new physical site or opening new branches.

- Death (Dissolution): Companies may cease operations, liquidate their assets, and dissolve, ending their formal existence.

By understanding these lifecycle phases, we can better appreciate how an entity-with-identity may evolve over time.

Time-Spatial Continuity

Having considered how physical processes and spatial localization underpin identity, we can now offer a more concise definition of an “entity-with-identity” (ewi):

An entity-with-identity is defined by its unbroken continuity through space and time, as a coherent bundle of physical processes (i.e., Turing machine heads) closely connected to specific, complex physical configurations (e.g. data or state).

This continuity means that an entity-with-identity traces a path - sometimes called a world line - through four dimensions: the three dimensions of space plus time. Outside of major lifecycle events (e.g., birth, death, merger, fission), it remains trackable via an uninterrupted trajectory.

A real-world entity-with-identity (such as a cat) is a 3D object in space and it exists across time, creating a kind of 4D trajectory. For example:

- At 10:00 a.m., the cat is in the kitchen.

- At 10:05 a.m., it may be on the couch.

- At 2:00 p.m. (four months later), it’s still around - maybe older, maybe heavier - but we say it’s “the same cat”.

Even though its atoms are constantly being replaced (metabolism, shedding fur, etc.), there is no magical teleportation or spontaneous multiplication. Instead, we can follow its 3D path through time, observing that today’s cat is physically connected to the cat from four months ago. That unbroken path is what we point to when we say, “Yes, it’s still the same cat”.
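One way to make "no teleportation" checkable is to treat a world line as a list of timestamped positions and reject any pair of consecutive samples that would require an impossible speed. This is only a rough proxy for continuity, and the function name, the speed limit, and the sample data are assumptions made for this sketch.

```python
import math

def is_continuous(world_line, max_speed):
    """world_line: list of (t_seconds, (x, y, z)) samples sorted by time.
    Returns False if any step would need a speed above `max_speed`."""
    for (t0, p0), (t1, p1) in zip(world_line, world_line[1:]):
        dt = t1 - t0
        if dt <= 0:
            return False                      # samples must move forward in time
        if math.dist(p0, p1) > max_speed * dt:
            return False                      # a "teleport": no path links the samples
    return True

# The cat walks from the kitchen to the couch within five minutes.
cat = [(0, (0, 0, 0)), (300, (4, 3, 0))]
# An impostor "cat" appears one kilometer away a second later.
teleport = [(0, (0, 0, 0)), (1, (1000, 0, 0))]

print(is_continuous(cat, max_speed=10))        # True
print(is_continuous(teleport, max_speed=10))   # False
```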

Philosophically, this ties into the famous saying by Heraclitus, “No man ever steps in the same river twice”. Both the man and the river are flowing, changing. But, from a practical perspective, we can still talk about “the Danube River” because it retains a certain continuity, even if individual water molecules come and go. Likewise, each one of us is a continuous, living process in time - a 4D path - so we say we remain “the same person” even though our cells are constantly replaced.

This paradox underscores that while change is constant, continuity is what sustains identity. A river has a particular channel of flowing water molecules, and a person has a specific physical organism that evolves yet remains connected through time. We label these entities “the same” because their unbroken chain of space-time continuity holds them together as recognizable wholes.

Hence, time-spatial continuity - the consistent linking of an entity’s present state (= specific, complex physical configuration) to its past - forms the bedrock of identity, both in the physical world and in our increasingly digital environments.

Digital Identity and Identity Management

As I mentioned in the Naming and References section, whenever we deal with entities with identity (specific, complex configurations), we need a clear way to identify them. That’s why we use references - think of them as long strings of characters that uniquely pinpoint these configurations.

In the digital world, we typically rely on surrogate keys to represent human users within computer or software systems. Whenever someone wants to log in or gain access, they must prove they are who they claim to be. This process is known as authentication, and one of the most common ways to handle it securely is through multi-factor authentication (MFA).

Such factors can be:

- Something you know: for example, a secret password that only you know.

- Something you have: like a physical device or hardware token.

- Something you are: biometric traits such as your fingerprint.
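To show how two of these factors can be combined, here is a minimal sketch that checks a password (something you know) together with a time-based one-time code (something you have, namely the device holding the shared secret). It uses TOTP as specified in RFC 6238, built on HOTP from RFC 4226. This is a different and simpler mechanism than FIDO2/WebAuthn, which relies on public-key signatures. The `verify_login` helper, its parameters, and the PBKDF2 iteration count are assumptions for illustration.

```python
import hashlib
import hmac
import struct

def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                       # dynamic truncation
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

def totp(secret: bytes, unix_time: int, step: int = 30) -> str:
    """Time-based variant (RFC 6238): the counter is the current time step."""
    return hotp(secret, unix_time // step)

def verify_login(password, code, stored_hash, salt, secret, unix_time):
    """Hypothetical check that requires BOTH factors to succeed."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)
    knows = hmac.compare_digest(candidate, stored_hash)          # something you know
    has = hmac.compare_digest(code, totp(secret, unix_time))     # something you have
    return knows and has
```

A production system would also tolerate small clock drift, rate-limit attempts, and reject reused codes. None of that is shown here.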

I’ve often blogged about my favorite hardware for proving digital identity: the Trezor Model T. This device combines two factors in one unit and allows you to go passwordless. You simply unlock it on its screen, and then it uses FIDO2/WebAuthn to verify your identity.

FIDO2 and WebAuthn are modern authentication standards designed to reduce the reliance on weak or reused passwords and enhance overall account security.

With this approach, you can establish a truly self-sovereign and decentralized way of identifying yourself online, without relying on centralized services like social login or other single sign-on platforms.

Footnotes

[1] See Digital Integrity - disrupting the personal data economy: Five years in, it is clear the GDPR is not enough

[2] Barbapapa © Alice Taylor & Thomas Taylor. All rights reserved. Used with permission. For more information, visit www.barbapapa.com.

[3] See also The Mind-Expanding Ideas of Andy Clark.

[4] See Making reproducible builds visible: As software controls more and more of our lives, it is becoming ever more important that we have software that we can trust. Trusting the software creators and hoping for the best has not proven effective. When there is open source, automated reviews and code audits, it is possible to verify that that source code can be trusted. But source code does not run on computers; binaries do. The app publishing process must be able to confirm that the binary running on your device matches the trusted source code. This is called reproducible builds.

[5] It’s not that the scientific consensus is a good concept in the first place. But if you use it as a proxy for truth, you have to let competition unfold on a level playing field so that new ideas can be tested without prejudice. Relying on consensus will throw us back to pre-modern times, when truth was decided by rhetoric and majority opinion. The scientific revolution - led by figures like Galileo Galilei and Isaac Newton - placed the experiment at the heart of determining truth. Einstein’s relativity theory was validated not because he was a persuasive speaker, but because experiments showed it fit observable reality better than previous models.

[6] We will see that an entity is a synonym for a (complex) physical configuration difficult or even impossible to replicate.

[7] See: The Value of Values with Rich Hickey: Rich Hickey’s concept of a “value” in functional programming emphasizes immutability and timelessness, which contrasts with the idea of an ever-changing entity that persists through time.

[8] What Matters Is the Organism: If you suffer memory loss, you don’t become someone else. You remain you because of the unbroken chain of your body’s existence as a single, living entity.